Apple, Facebook and the Global Race for Privacy

People always cared about what companies do with their information. Now, companies will have to care too.

In the fall of 2007, I was working at an ad agency and met with a Facebook sales rep. He was pitching us a new social commerce idea, one where you could notify your friends of purchases you just made. As an advertiser, it sounded neat. Myspace was still the predominant social network of the day by a wide margin and Facebook was the younger sibling that was always willing to push the envelope technologically to get its share of the ad budget.

This product had a name that has since become infamous: Beacon. What ensued shortly after the launch of Facebook Beacon was a scandal. The very day it made its debut, tech journalist Om Malik asked, “Is Facebook Beacon a Privacy Nightmare?” Security researchers discovered that users’ personal browsing activities were being reported back to Facebook even when they had opted out and, worse yet, even when they were logged out. In less than a month, the New York Times reported that 50,000 Facebook members had signed an online petition objecting to the program, and Facebook responded by reining in the offering. A marriage proposal was partially ruined when the engagement ring purchase was broadcast to the future husband’s friends, which ultimately led to a $9.5 million class-action lawsuit settlement. In less than 2 years, this iteration of Facebook Beacon was dead.

There were two telling quotes from the NY Times article. One, from then Facebook vice president and current billionaire SPAC king Chamath Palihapitiya, concerned Facebook’s refusal to offer the universal opt-out from the program that users had requested. Another was from a Facebook user who started her comment with, “We know we don’t have a right to privacy, but[…].” In many ways, these two quotes set the battle lines between corporations and users and the implied social contract one had with the other.

While the initial rollout of Facebook Beacon was bungled, it would define the strategy behind Facebook’s rapid ascent: utilizing its identity layer to capture off-platform information that became disproportionately valuable to Facebook. Facebook’s power lies not in the social network itself but in its ability to de-anonymize external data streams. Facebook Pixel, its current tracking solution, which is employed by countless advertisers, apps and websites, is often referred to as a beacon.

To be clear, this is not a singular indictment of Facebook, but it has been at the tip of the spear in designing products that push the envelope around ad targeting. It has rolled out many clever and innovative ways of capturing external data about its users. As Facebook grew and ran away with 20% of the global digital advertising market, it forced many of its competitors to do the same. In 2018, Google Chrome made a “seemingly small change” that allowed Google to capture all the browsing history of users who logged into Gmail. The advertising industry is now obsessed with implementing an identity layer on top of all ad-supported internet traffic. Facebook paved the way towards a de-anonymized internet and has taken the brunt of the PR damage in return for a lion’s share of the gains.

We have grown accustomed to clicking “I Accept” whenever a 20-page click-wrap agreement presents itself while installing a software program. Legally, we’re told that we should have read all 20 pages, understood all of their intricacies and implications and decided of our own free will to enter into an agreement to obtain access to a company’s product. In practice, we really have no choice if we want to use a particular product. We play ball on their terms. Websites and apps took advantage of this behavior with the same “I Accept” windows, except that the rules often change after you sign up and your data might be processed in drastically different ways after you have invested tons of time and effort. With traditional software, you could simply decide not to upgrade to the new version if you didn’t want to accept the new terms and conditions. By and large, this is not an option that’s available with most consumer internet services.

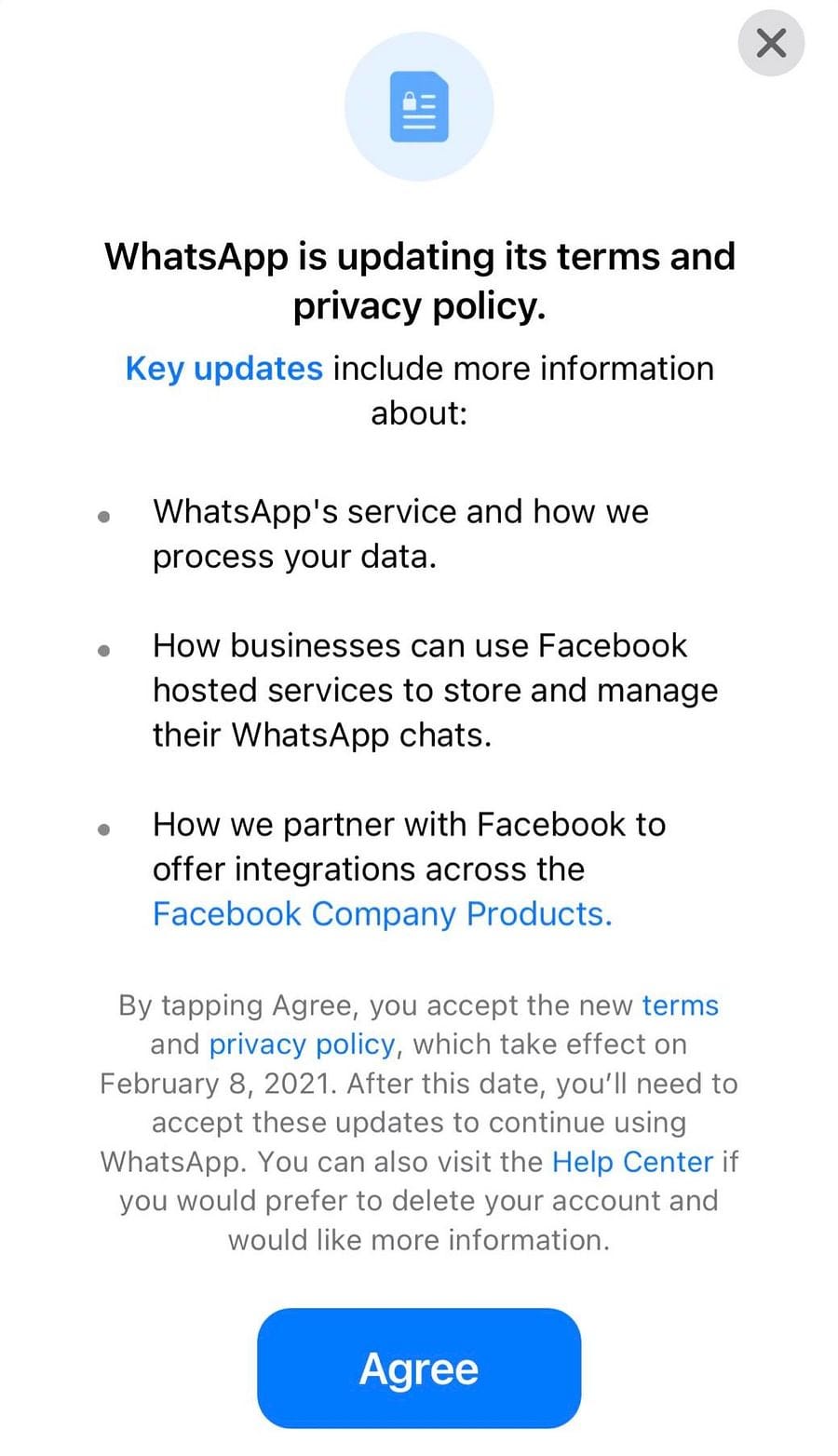

Facebook, once again, provides a recent example of this phenomenon. In 2014, they paid nearly $16 billion for WhatsApp, a product that made $16 million in the 6 months leading up to its acquisition. At the time of acquisition, WhatsApp made money by charging its users $1 a year from the second year onward. Facebook was never going to make its money back if it stuck to this model, and surely the founders of WhatsApp must have known this too. It should come as no surprise, then, that more than 6 years after the acquisition, Bloomberg reported that “WhatsApp Users Can No Longer Avoid Sharing Data With Facebook.” Mirroring the strategy they tried 14 years ago, Facebook would once again refuse to offer an easy opt-out. In fact, this time, there was none.

This Time, It’s Different

Unlike the Beacon rollout, your friends would be none the wiser with the new WhatsApp privacy policy changes, but your overall ad targeting profile at Facebook might be. The reaction, once again, was swift. A change to WhatsApp’s terms of service and privacy policy was announced on January 4th. By the 15th, these changes would be delayed. In the 10 hectic days between the announcement and retreat, a few things happened:

Elon Musk touted Signal, an open-source messaging app funded by WhatsApp co-founder Brian Acton, to his Twitter followers. This added 26 million downloads in India alone (WhatsApp’s largest market). Signal would shortly race to the top of the US iOS App Store charts.

Trust in Facebook was so low that disinformation spread rapidly regarding the overall security and privacy of WhatsApp, including unsubstantiated rumors that Facebook/WhatsApp could now read user messages and listen in to conversations.

Turkish president Erdogan announced that he was moving his WhatsApp chat groups to state-owned BiP.

WhatsApp rival Telegram reported that in a span of 72 hours, they added 25 million new users and Telegram CEO Pavel Durov referred to the exodus as the “largest digital migration in history.”

What was once a minor rebellion from 50,000 members of an up-and-coming social network had returned as a massive global defection that included multiple heads of state. Ironically, most WhatsApp users had already been sharing their information with Facebook since 2016.

So what changed between 2016 and 2021? Perhaps the biggest story buried amidst the 2021 WhatsApp privacy scandal was the fact that European users were exempt from the forced data sharing that the rest of the world was told to prepare for. Unlike 2007, there was now an expectation of privacy rights from users, at least those in Europe.

Most Favored Nations

In 2016, the European Union adopted the General Data Protection Regulation (GDPR). Germany’s experience with the horrors of surveillance, first under the Nazi regime and later under the East German Stasi, was the driving force that led to some of the world’s first information privacy laws in 1970 and ultimately to the most comprehensive and impactful privacy legislation in the world. For the first time, users had digital privacy rights that were guaranteed by the government with the full force of heavy fines behind them.

While GDPR had good intentions, it had massive loopholes and lots of gray areas that resulted in an unpleasant experience for users. In advance of its enforcement in 2018, inboxes were flooded with emails asking for explicit permission to continue communications. Users were also bombarded with consent windows asking them for permission to use cookies in an age when cookies were quickly becoming obsolete. Research I conducted and later presented to the Office of the California Attorney General showed that, in Europe, it was far more difficult to opt out of data collection than to opt in, thanks to the use of dark patterns, which were not explicitly regulated under the GDPR.

GDPR’s most important contribution is that its core concepts laid a foundation for other countries and corporations to build upon. Other countries, including Brazil, Australia, Japan, Thailand, Chile, India and South Africa, have since adopted national privacy laws of their own based on this framework. The EU’s willingness to levy fines coupled with the market power of 500 million consumers has allowed the EU to obtain a level of leverage against Facebook that nobody else had. However, this unique leverage has set up an uneasy dynamic among citizens outside of the EU. It led many to ask, “Are we second-class digital citizens with fewer privacy rights?” The idea of separate privacy standards for Europe and India did not sit well with many Indians. Seizing an easy political win, the Indian technology ministry asked WhatsApp to withdraw the privacy policy change, stating,

“This differential and discriminatory treatment of Indian and European users is attracting serious criticism and betrays a lack of respect for the rights and interest of Indian citizens who form a substantial portion of WhatsApp’s user base.”

India won’t be the last country to feel offended by the disproportionate treatment of its citizens. What the GDPR set off was a global arms race towards privacy rights. The eventual outcome will be a global baseline of privacy rights that most people around the world can rely on.

What About Us?

One glaring omission from the list of countries with national data privacy laws is the United States. How long will it take before we realize that we have become second-class digital citizens? California has been at the forefront of privacy legislation, first with the passage of the California Consumer Privacy Act (CCPA) and more recently with the passage of the California Privacy Rights Act (CPRA). These pieces of legislation were unique because they arose under pressure from concerned citizens who gained significant support from voters.

Alastair Mactaggart, a political novice by his own admission, had a discussion with a Google engineer who told him that he would be horrified if he knew the amount of data they were collecting on users. Alastair worked with privacy researchers including Ashkan Soltani to craft legislation that improved upon the GDPR by closing its loopholes while still staying legal as a state law. It spurred a ballot initiative that led to the passage of the CCPA. I moved back to California in 2019 to participate in the CCPA rule-making process, and my comments to the attorney general’s office led to the introduction of one of the first regulations that aimed to inhibit dark patterns in the collection of consent. But still, despite our best collective efforts, the CCPA and CPRA are far from perfect. The regulatory ability to prevent dark patterns, the psychological design tricks used to manipulate or force users towards an intended behavior, will ultimately determine the success or failure of privacy legislation. In the era of consent and #MeToo, we really must ask ourselves, does a forced “yes” really mean yes?

California will play the same role in the US that the EU played for the rest of the world. Data privacy laws have since passed in Nevada and Maine, and 23 other states are currently going through the legislative process to create their own data privacy laws. With at least half the country considering a data privacy law, it shouldn’t be long before we see a substantive draft of a national privacy law being debated on the floor of Congress.

Previewing the Global Consent Standard

While it remains to be seen if privacy legislation can hit the mark on its own, companies are already leveraging the theme of privacy as a key differentiator. Chief among these companies is Apple, which has led the marketplace in providing transparency about the types of data that apps collect and track and a truly easy way for consumers to opt out of certain types of data collection in iOS apps. It could easily be argued that Apple has done more to bring effective widespread data privacy protection to consumers around the world than any privacy law has thus far. This notion would have been absurd just 8 years ago.

Apple’s approach to privacy has at differing times been cynical, convenient, brilliant and innovative. After Edward Snowden’s NSA leaks in 2013 implicated Apple as a participant in the PRISM data collection program, Tim Cook sought to aggressively differentiate Apple from Google and Facebook, two other alleged participants in the program. Apple bolstered its privacy credentials with many innovations, including the use of differential privacy in its data analysis, a way of processing data in bulk to glean insights without having direct knowledge about the data points tied to any single individual or device. By 2018, Apple’s reputation around privacy had made more than a full recovery, with Fast Company declaring that Apple’s best product is now privacy.
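To make the idea of differential privacy a little more concrete, here is a minimal sketch of randomized response, one of the simplest textbook techniques in this family. It is not Apple’s actual implementation; the function names, the privacy parameter `epsilon` and the 30% population rate are all assumptions chosen for illustration. Each simulated device flips its true answer with some probability before reporting it, so no single report can be trusted, yet the aggregate rate can still be estimated.

```python
import math
import random


def randomized_response(truth: bool, epsilon: float, rng: random.Random) -> bool:
    """Report the true answer with probability e^eps / (e^eps + 1), else the opposite."""
    p_truth = math.exp(epsilon) / (math.exp(epsilon) + 1.0)
    return truth if rng.random() < p_truth else not truth


def estimate_true_rate(reports: list[bool], epsilon: float) -> float:
    """Debias the noisy reports to estimate the population-level rate."""
    p = math.exp(epsilon) / (math.exp(epsilon) + 1.0)
    observed = sum(reports) / len(reports)
    # observed = p * rate + (1 - p) * (1 - rate); solve for rate.
    return (observed - (1.0 - p)) / (2.0 * p - 1.0)


# Hypothetical population: roughly 30% of devices have some sensitive attribute.
rng = random.Random(0)
true_rate = 0.30
population = [rng.random() < true_rate for _ in range(100_000)]

# Each device adds its own noise before anything leaves the phone.
reports = [randomized_response(x, epsilon=1.0, rng=rng) for x in population]

estimate = estimate_true_rate(reports, epsilon=1.0)
print(f"estimated rate: {estimate:.3f}")
```

With enough reports the estimate lands close to the real rate, even though any individual report may well be a lie. A smaller `epsilon` means more noise and stronger deniability for each user, at the cost of needing more data for the same accuracy.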

While many consumers now had a sense that Apple was safeguarding their privacy as best it could, the truth was a bit murkier. When the press started to peel back the onion to understand just how the app economy worked, they discovered that iOS apps were still a haven for tracking and third-party data sharing despite Apple’s fervency about privacy protection. An investigation from The Washington Post revealed that 5,400 tracking events were sent from a reporter’s phone over the span of a week, mostly coming from the hidden SDKs (embedded pieces of code) inside his iOS apps. The NY Times did a deep dive into location data collected by these SDKs and was able to tie back data points collected from a single device to a specific person. The Wall Street Journal revealed that iOS apps were sending sensitive medical data including body weight, blood pressure, menstrual cycles and pregnancy status to Facebook. The Atlantic now spoke of “Apple’s Empty Grandstanding About Privacy.” The reality is that while Apple always had a very strict stance against third-party tracking in its Safari browser, where it made no additional revenue, it cultivated a relatively lax environment when it came to its $519 billion iOS app economy, where cross-app tracking was enabled by default and SDKs were constantly sharing information with third parties.

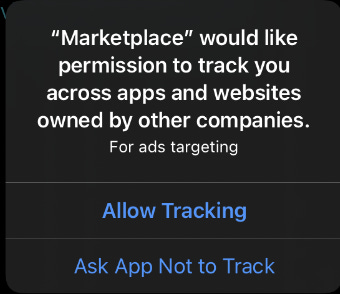

Last year, under siege again around its privacy practices and with public awareness of privacy laws such as the CCPA rising, Apple took advantage of the moment to announce a slew of features that would give most users an easy way to stop in-app tracking for the very first time. These features included data privacy “nutrition labels” showing users which pieces of information apps were using to track them, prompts that alerted users when apps were continuously collecting their geolocation data and, most importantly, a simple opt-in/opt-out mechanism that allows users to decide whether they want to be tracked across different sites and apps.

The unifying theme behind all these features is transparency. Users now have insight into how their apps are processing their information. The most important feature, which is called the App Tracking Transparency framework (ATT), accomplishes what years of privacy legislation have not yet achieved: a widely used consent prompt that doesn’t utilize dark patterns. It is just as easy to say yes to tracking as it is to say no. The rollout of ATT will get users around the world accustomed to a privacy prompt that doesn’t suck.

Fallout and Adaptation

ATT is the biggest threat to Facebook and the status quo of the digital advertising ecosystem. Facebook took out newspaper ads last year complaining that the ability to easily opt out of cross-app tracking will jeopardize the livelihoods of small business owners. Apple has been under repeated pressure to delay and modify ATT and did indeed delay the rollout last September. This decision undoubtedly saved the Q4 growth numbers of many companies that rely on the free-flowing data environment of iOS to drive user acquisition. Bumble, a dating app, says it expects 0-20% of users to opt in to tracking in iOS and expects its cost of user acquisition to go up.

This past week, Tim Cook gave a speech on Data Privacy Day that clearly outlined Apple’s stance against the digital advertising industry as it exists today.

“Technology does not need vast troves of personal data stitched together across dozens of websites and apps in order to succeed. Advertising existed and thrived for decades without it, and we’re here today because the path of least resistance is rarely the path of wisdom.”

Facebook responded on its quarterly earnings call by accusing Apple of having ulterior motives as a competitor. These changes will likely cost Facebook and Google billions in revenue this year.

The spigot of data from iOS apps will change drastically once ATT is rolled out sometime this spring. Marketers are already scrambling to adapt to changes in Facebook’s ads program. In response, Facebook and other large platforms will find ways to replace the loss of this data by bringing more tracking in-house. The key capability threatened by these changes is the ability to easily track a sale or lead and to link it back to a particular ad served to a specific user. I predict that Facebook will aggressively start pushing its own on-platform shops so that this entire process can be tracked within its walled garden. It should be noted that there is still no easy way to turn off all targeted ads within Facebook due to the extensive use of dark patterns.

Users are now entering a world where they don’t always have to click “I Accept” in order to use a product or service. In a world without forced yeses, companies will have to reconsider why and how they collect and store user data and who they share it with. These changes are welcome at a time when the internet is entering a dangerous phase. Facebook’s obsession with collecting real-world identities and building advertising products that offer advertisers easy access to this identity layer has pressured its competitors large and small to do the same. The end-state of this race for identity is an internet where you are always logged in and never anonymous. Companies that require real-world identities to function properly, especially social networks, should be smarter about the data and metadata they choose to collect on a particular person in an age where data breaches are becoming increasingly common.

The NSA’s PRISM program cost only $20 million per year in 2013. What’s left unsaid is that the rest of the surveillance infrastructure it drew on was heavily subsidized by ad revenue, paid for by advertisers and ultimately by consumers themselves. The US Defense Intelligence Agency recently admitted to buying smartphone location data and was able to review data on US citizens without needing a warrant. Consumers will ultimately have to decide if they want to indirectly pay to be surveilled. Apple might be the first company to make a fortune promoting privacy as a key brand value, but it won’t be the last.